REPROGRAMMING FREQUENCIES: SONIFYING THERMAL EMOTIONAL MAPS TO PERSONALISED SOUNDS, CYMATICS, & GEOMETRY

An AshZero Workflow

The AshZero project builds on existing research in affective computing and emotion recognition, integrating thermal imaging, sound sonification, and cymatics with the timeless blueprints of ancient sound sciences (Tantra, Ayurveda, Veda, Yoga) to build novel tools towards decrypting and transforming individual human emotional experiences.

Abstract

In a rapidly evolving world influenced by artificial intelligence and social media, personalised emotional reprogramming is essential for decision-making rooted in self-worth and adaptability. As we navigate complex social landscapes, the personalised approach offers a dynamic alternative to dependence on psychotherapy, counselling or coaching, which often inadvertently reinforce limiting beliefs and conditioned responses within established frameworks of societal norms. This ultimately disconnects individuals from their unique emotional imprint, affecting creative potential and preventing perspective shifts.

AshZero’s workflow integrates timeless blueprints from Ayurveda, Yoga, and Tantra, which focus on personalised emotional reprogramming by harnessing individual traits through lifestyle, sound and breath in the form of dinacharya, mantra and prana flow. These practices reflect the profound connection between emotional intelligence and neuroplasticity, the brain's ability to reorganise itself by forming new neural pathways.

This workflow facilitates personalised emotional expansion by leveraging advanced technologies such as thermal imaging, sound sonification, sound to art patterns, and sound to colour-emotion mapped cymatics and geometrics, working non-invasively towards emotional reprogramming.

The AshZero workflow structure is rooted in over 20 years of applied research in ethnomedicine and documentation and practice of traditional Yoga, Tantra and Ayurveda by Dr. Sumit Ashok Kesarkar.

Key elements of the AshZero workflow:

Thermal Imaging for Emotional Insight: Utilises thermal imaging to capture physiological changes linked to emotional states, offering an unbiased view of individual emotions through heat signatures.

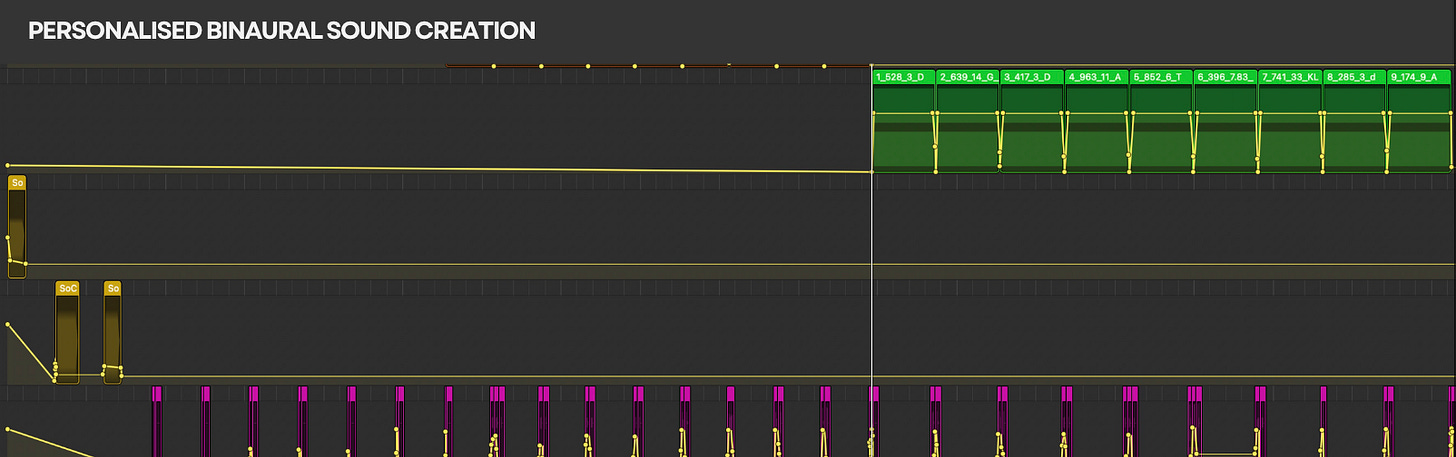

Sonification of Thermal Emotional Data: Employs mathematical sound blueprints from Ayurveda, Yoga, and Tantra to sonify thermal emotional data into unique binaural beats with individualised frequencies that correspond to bija mantra dynamics and brain wave patterning, activating sensory reprogramming.

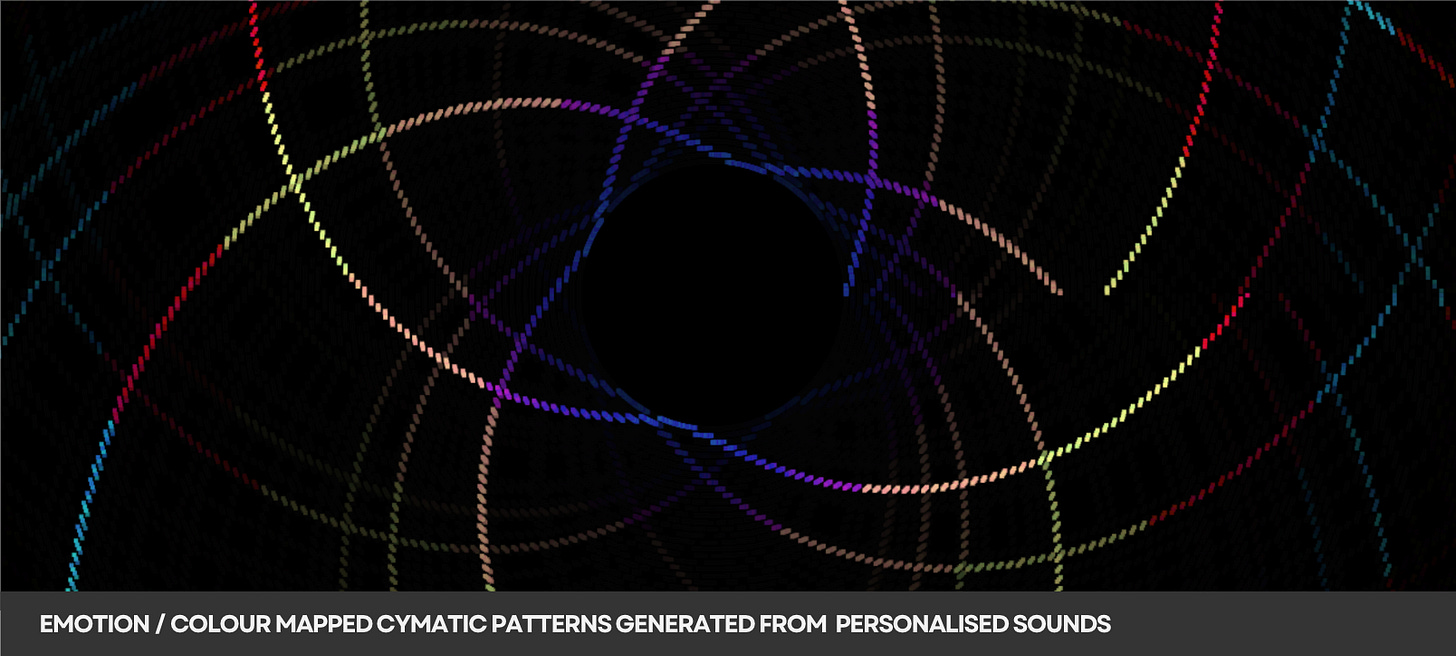

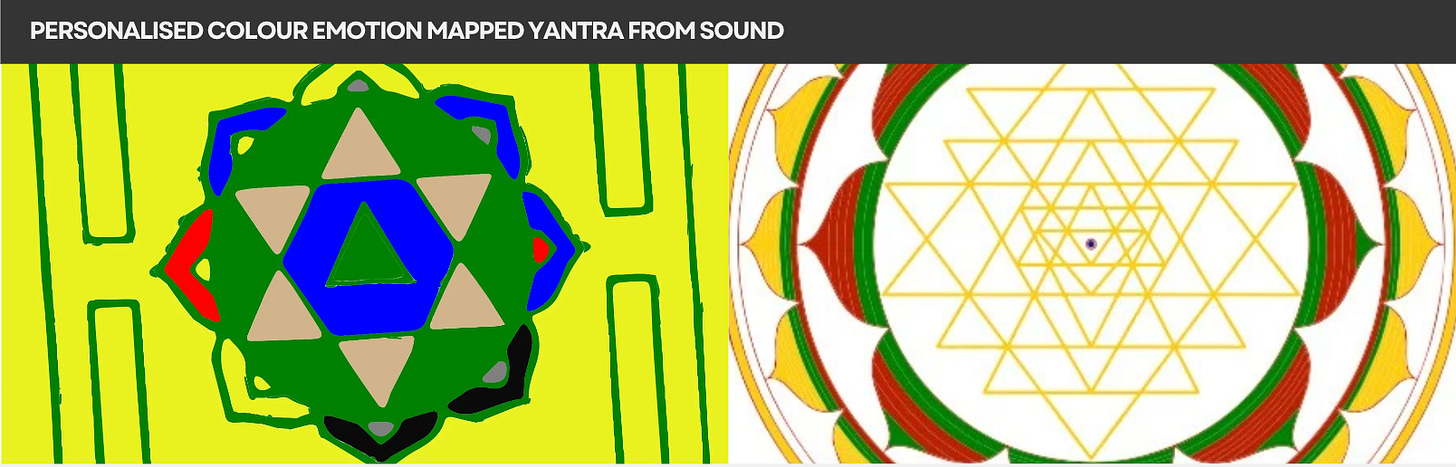

Cymatics and Visual Geometry: Translates personalised sounds into visual patterns like cymatics and yantras, colour-mapped to emotions based on Bharata's Rasa theory and contemporary colourgenics for a highly immersive experience.

Personalised Breath Work: Integrates tailored breath patterns that adhere to mathematical precision of prana unit and marma distribution which catalyses the emotional reprogramming process.

Artistic Representation of Emotions & Dynamic Self-Reflection: Transforms emotional voice patterns into immersive art which maps emotional intelligence patterning over time, providing opportunities for self-reflection.

Role in Therapeutics: Under supervision, the framework shows promise in addressing various behavioural disorders, including anxiety, depression, OCD, PTSD and ADHD, and serves as an adjunct in cognitive therapies for conditions such as Aphasia, Dementia, Alzheimer’s, TBI and ASD.

Empowerment and Autonomy: Without external frameworks, individuals can explore emotional reprogramming on their own terms, promoting self-discovery without the pressures of interpersonal judgments or societal expectations, and breaking conditioned responses, leading to adaptive decision-making, and a deeper understanding of emotional triggers and alternative responses or perspectives.

To know more about the detailed workflow, read the full blog below ...

NOTE: All references are cited below.

Sonification

Sonification is the process of converting data, such as thermal images or light captured from various mediums, into sound, providing a more intuitive and personalised way to experience and analyse information depending on the context of use. Sonification is increasingly utilised in various fields, from space research and environmental monitoring, to healthcare.

For example:

NASA's Hubble Space Telescope and Chandra X-ray Observatory have implemented sonification techniques to translate the brightness, colour, and position of celestial objects into sound. Here elements like brightness are represented by volume, while colour can dictate pitch, creating a rich auditory landscape that reflects the universe's intricacies.
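The brightness-to-volume and colour-to-pitch idea can be sketched in a few lines of Python. This is a minimal illustration, not the method used for the Hubble or Chandra sonifications: the function names, the 220 to 880 Hz pitch range, and the choice of hue as the "colour" dimension are all assumptions made for the example.

```python
import colorsys
import math


def pixel_to_tone(rgb, low_hz=220.0, high_hz=880.0):
    """Map one RGB pixel (0-255 per channel) to (frequency_hz, amplitude).

    Assumed mapping, for illustration only: hue sets pitch on a
    logarithmic scale and brightness (HSV value) sets volume.
    """
    r, g, b = (channel / 255.0 for channel in rgb)
    hue, _saturation, value = colorsys.rgb_to_hsv(r, g, b)
    frequency_hz = low_hz * (high_hz / low_hz) ** hue
    return frequency_hz, value


def render_tone(frequency_hz, amplitude, duration_s=0.1, sample_rate=8000):
    """Render a sine tone as a list of float samples in [-1, 1]."""
    n_samples = round(duration_s * sample_rate)
    return [
        amplitude * math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(n_samples)
    ]


def sonify_row(pixels):
    """Scan a row of pixels left to right, emitting one short tone per pixel."""
    samples = []
    for rgb in pixels:
        samples.extend(render_tone(*pixel_to_tone(rgb)))
    return samples
```

Calling `sonify_row` on each row or column of an image in turn gives a simple "scan" of the picture, with position conveyed by time.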

Researchers have used sonification to analyse soil health, translating data on nutrient levels and moisture into sound. This auditory feedback can help farmers and scientists make informed decisions about land management. Additionally, in artificial intelligence and machine learning, sonification can assist in interpreting complex datasets, making patterns more discernible through sound.

The use of sonification to represent heart rate as electrocardiogram (ECG) signals, brain waves as EEG, and muscle signals as EMG sounds is well known. Sonification is also being explored as an alternative to visual feedback in surgical navigation systems. By converting spatial data into sound, surgeons can receive guidance cues through audio feedback while performing procedures. This can help overcome limitations of visual-centric navigation, such as change blindness and inattentional blindness. Researchers have used sonification to uncover hidden details in medical images, such as vessels, organs or tumours that are difficult to see with the naked eye. By converting image data into sound, healthcare professionals can potentially detect subtle features that may be indicative of disease or abnormalities.

Sonification has also been shown to be effective in enhancing athletic performance and rehabilitating stroke patients. By converting movement data into sound, athletes and patients can receive real-time auditory feedback to improve their form and monitor their progress. The sounds can be designed to encourage desired movement patterns.

Sonification and Emotional Maps

Sonification is increasingly being utilised to detect and convey emotional data, offering innovative approaches to understanding human emotions. One notable example is the research conducted by Rönnberg (2021), which explores the sonification of running data to convey both information and emotional states. In this study, various acoustic features—such as tempo, loudness, and decay—were manipulated to reflect emotional arousal and valence, allowing participants to experience emotional feedback through sound. This approach demonstrates how sonification can effectively translate emotional data into auditory signals, facilitating a deeper understanding of emotional responses during physical activities.
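As a rough sketch of this kind of parameter mapping, the snippet below turns a valence and arousal pair into tempo, loudness and decay values. The ranges and the direction of each mapping are illustrative assumptions, not the values used in Rönnberg's study, and `emotion_to_sound` is a hypothetical helper name.

```python
def emotion_to_sound(valence, arousal):
    """Map valence and arousal, each in [-1, 1], to illustrative sound parameters.

    All ranges below are assumed for the example, not taken from the literature.
    """
    if not (-1.0 <= valence <= 1.0 and -1.0 <= arousal <= 1.0):
        raise ValueError("valence and arousal must lie in [-1, 1]")
    activation = (arousal + 1.0) / 2.0    # rescale arousal to 0..1
    pleasantness = (valence + 1.0) / 2.0  # rescale valence to 0..1
    return {
        "tempo_bpm": 60.0 + 80.0 * activation,  # assumed: higher arousal, faster tempo
        "loudness": 0.2 + 0.8 * activation,     # assumed: higher arousal, louder sound
        "decay_s": 0.2 + 1.0 * pleasantness,    # assumed: positive valence, longer decay
    }
```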

Another significant study, published by Cambridge University Press (Winters, 2013), discusses strategies for sonifying emotions by utilising acoustic and structural cues to target specific auditory-cognitive mechanisms. The research highlights the relationship between sonification and music, illustrating how sound can evoke emotional responses and enhance emotional communication. By employing different design methodologies, the study showcases how sonification can be tailored to convey distinct emotional states, thereby enriching the emotional experience of users.

These examples underscore the potential of sonification as a powerful tool for detecting and interpreting emotional data, paving the way for applications in various fields, including mental health, sports, and human-computer interaction.

The Tantra Ayurveda Blueprints of Sound

The use of sound towards individualised emotional expansion is not new. It has been an integral part of the foundational sciences of Tantra, Ayurveda, Veda, Yoga and many other spiritual sciences throughout history.

The use of seed sounds or Bija Mantra phonetics through rituals is documented to facilitate emotional expansion and personal transformation. Through recitation and visualisation, practitioners engage in a process that aligns their mental and emotional states with these vibrational frequencies, likely reprogramming their brain and hormonal pathways towards deconditioned emotional states.

Across various spiritual traditions, the practice of sound, particularly through bija mantras and other sacred syllables, has long been personalised for each disciple by teachers according to the individual’s constitution, selecting specific seed sounds that resonate with their unique energetic patterns towards physiological and brain pattern entrainment. These sound techniques are tailored to the unique genotype and phenotype, or prakriti, of an individual, allowing for a deeper connection to their emotional and sensory expansion. These personalised practices, which integrate sound, breath, and visualisation, have been integral to unbroken traditions throughout history.

These seed sounds, similar to binaural beats, create auditory illusions that influence brainwave patterns, and the repetition of bija mantras can induce specific cognitive and emotional states. This sonification effect stimulates the brain, promoting relaxation, focus, and heightened awareness.

Emotional Brain Wave Mapping and Sonification from Thermal Imagery

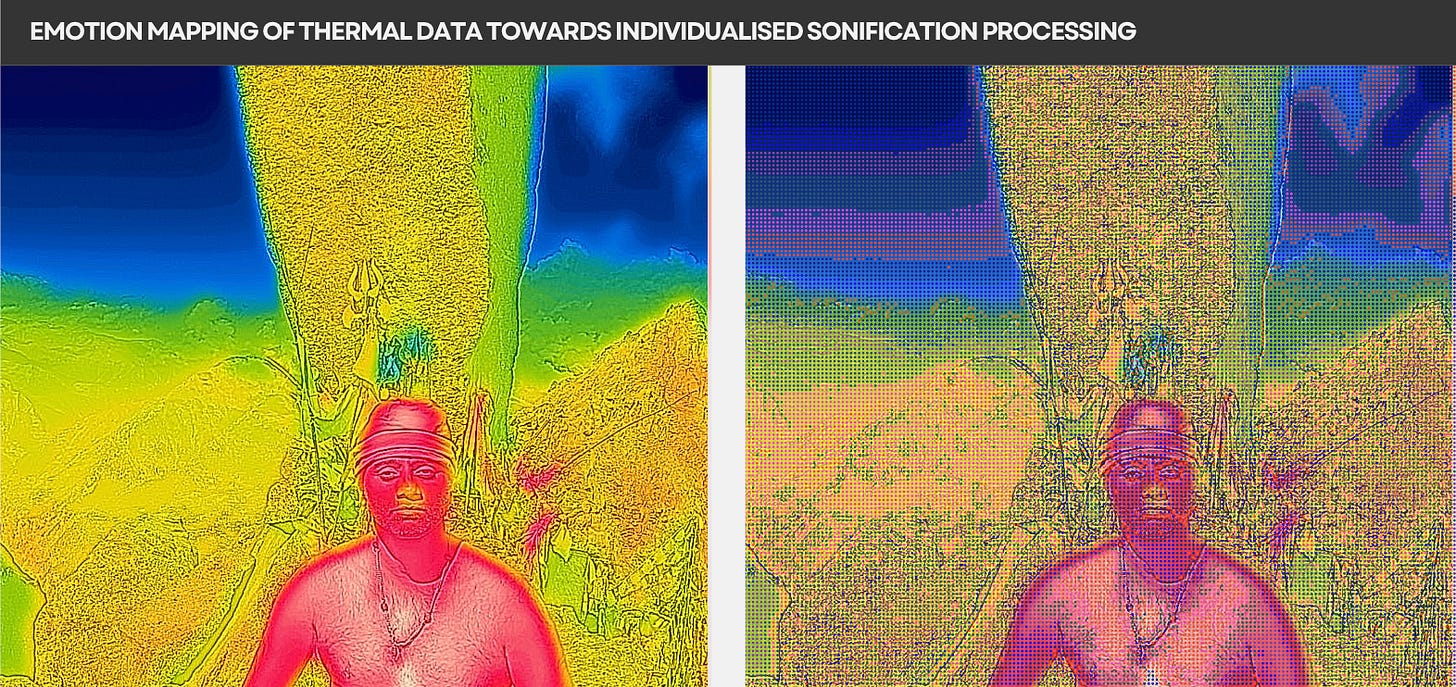

The AshZero project has engineered a process of analysing thermal images to assess human emotions integrated with sonification techniques. By extracting features from thermal and regular imagery, such as temperature variations and facial expressions, a model is being developed to classify emotions. This classification is then sonified, creating sounds that correspond to specific emotional states.

Existing literature supports the efficacy of thermal imaging in emotion recognition, with studies achieving high accuracy rates in identifying emotional states using machine learning techniques. Studies have also explored the use of thermal imaging for emotion analysis, demonstrating its potential in various applications, from healthcare to human-computer interaction. Furthermore, the use of sound in therapeutic contexts has been documented, indicating that specific auditory stimuli can promote emotional healing and well-being.

Research has shown that emotional experiences generate unique physiological signals, including variations in temperature and blood flow, which can be effectively captured through thermal imaging. For example, a study demonstrated the efficacy of infrared thermal imaging (IRTI) in recognising emotions by analysing facial emissivity variations, achieving classification accuracies greater than 85% for different emotional states like happiness and sadness.
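To make the idea of classifying emotions from facial temperature concrete, here is a minimal sketch that averages temperature over named facial regions and picks the closest reference profile. It is not the method of the cited studies or of the AshZero model: the region boxes, the nearest-centroid rule, and any centroid values passed in are placeholders that a real system would learn from labelled thermal data.

```python
import math


def roi_mean(frame, roi):
    """Mean temperature inside a region given as (top, left, bottom, right).

    `frame` is a 2D list of temperatures; bottom and right are exclusive.
    """
    top, left, bottom, right = roi
    values = [frame[r][c] for r in range(top, bottom) for c in range(left, right)]
    return sum(values) / len(values)


def thermal_features(frame, rois):
    """Return one mean temperature per region, ordered by region name."""
    return [roi_mean(frame, rois[name]) for name in sorted(rois)]


def nearest_centroid(features, centroids):
    """Pick the label whose reference feature vector is closest (Euclidean)."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))
```

In practice the feature vector would include many more regions and temporal changes, and the classifier would be trained rather than hand-specified.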

Additionally, incorporating brain wave data (e.g., EEG signals) into this framework can enhance the understanding of how emotions affect decision-making and social behaviour.

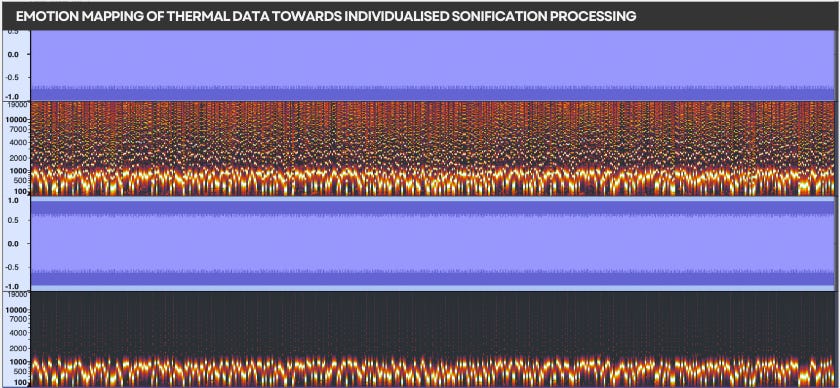

The sonification process involves mapping the emotional data extracted from thermal images to sound characteristics, creating individualised auditory experiences. By doing so, the program can support individuals to access and understand their unique emotional states more profoundly, potentially influencing the social moral choices that define their reality. This is supported by research indicating that sound can significantly impact emotional responses and decision-making processes.

Binaural sounds created from the sonified emotional data may lead to shifts in brain activity, fostering an adaptive dynamism in individuals as they navigate a constantly changing world.

Project Overview: Thermal Image Sonic Emotion Mapping

The foundation of this AshZero project lies in the science of sound and mantra, which have been utilised in various traditions to facilitate emotional and psychological transformation.

By incorporating these mathematical sound blueprints, the program aims to sonify thermal images—capturing the emotional states of individuals—into unique sounds.

The sounds are then processed and filtered to create frequencies that correspond to specific seed mantras, which the brain generates when the listener is exposed to binaural frequencies.

Mantras are not just auditory elements; they are deeply connected to brain wave patterns that reflect raw emotional states outside of their conditioned experience.

Effort-based techniques can often yield limited or target-oriented results, which may remain within the same domain of conditioning. Free of the bias and judgments that can come through interpersonal relationships, academic knowledge or targets, these personalised sounds are encoded with brain frequencies which can be accessed through the simple process of listening and, in further stages, observing the cymatics and visuals mapped to them. Beyond any targeted results, the effortless emotional reprogramming that comes from absorbing sound and geometry becomes apparent in daily life and decision-making.

This innovative approach seeks to understand and potentially transform human social and moral behaviour by breaking limited attachments to life patterns and fostering integrity and independence.

This project has now developed a unique system that captures thermal data from individuals in various emotional settings, both controlled and uncontrolled, and sonifies this data to create an immersive auditory experience.

The process begins with the acquisition of thermal images, which are then analysed to extract emotional states that cannot be manipulated, as heat signatures and geometry are not manipulable by self-image projections. The sonification of these emotional states generates sound that is further processed to extract binaural beat frequencies corresponding to brain wave patterns and seed mantras.

Building on its various current prototype tools, the project aims to develop an all-inclusive tool for mapping emotions from thermal and regular images to brain activity, creating a comprehensive sonification experience.

Building on these tools, the project aims at further leveraging machine learning for emotion classification and integrating auditory feedback, and thus seeks to enhance the understanding of human emotions and their impact on decision-making.

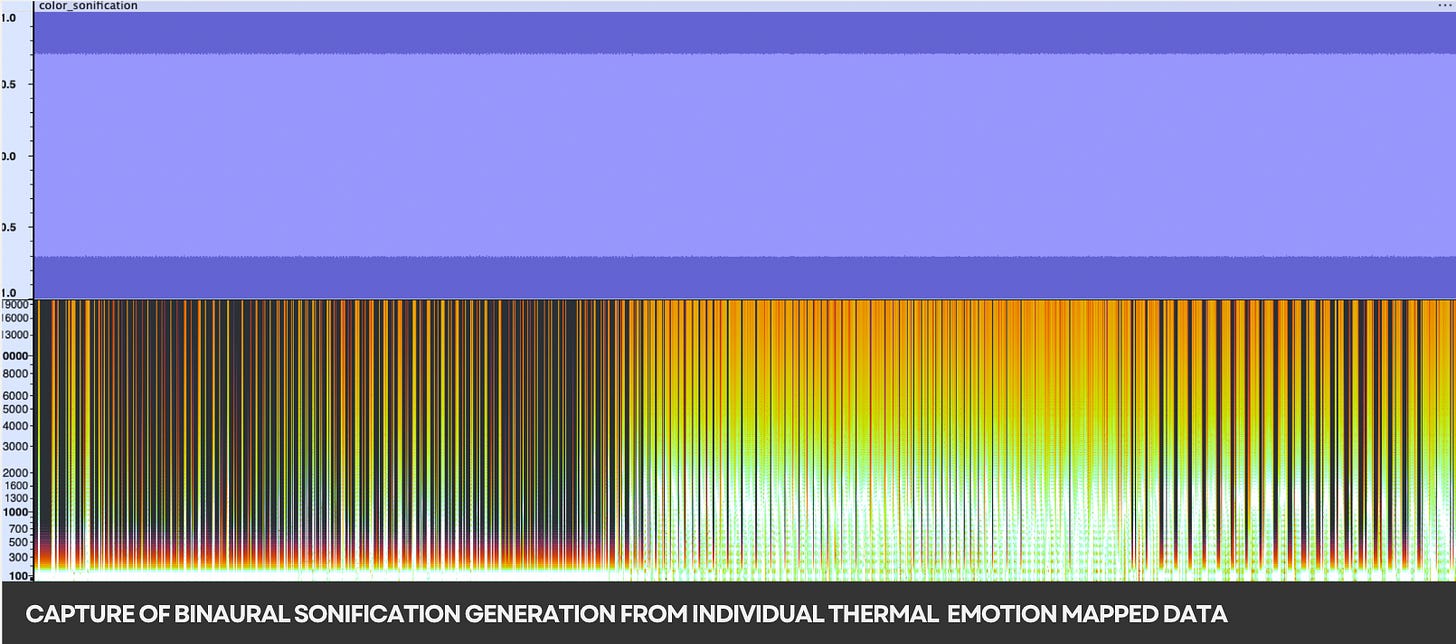

By mapping the sonified thermal images to specific binaural beat frequencies corresponding to brain wave patterns, the AshZero project aims to create an immersive auditory experience that resonates with the listener's emotional state, expanding upon the codified restrictions the brainwaves may have been limited to through conditioning.
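A binaural beat is produced by playing two tones of slightly different frequency, one to each ear, so that the difference between them falls in the target brain wave range. The sketch below assumes commonly cited approximate EEG band limits (exact boundaries vary between sources) and a hypothetical 200 Hz carrier; it does not reproduce AshZero's own frequency selection.

```python
import math

# Commonly cited approximate EEG bands in Hz; exact boundaries vary by source.
BRAINWAVE_BANDS_HZ = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
}


def beat_for_band(band):
    """Pick the centre of a band as the beat frequency (an arbitrary choice)."""
    low, high = BRAINWAVE_BANDS_HZ[band]
    return (low + high) / 2.0


def binaural_beat(carrier_hz, beat_hz, duration_s=1.0, sample_rate=44100):
    """Return (left, right) sample lists whose frequencies differ by beat_hz."""
    n_samples = round(duration_s * sample_rate)
    left = [
        math.sin(2 * math.pi * carrier_hz * i / sample_rate)
        for i in range(n_samples)
    ]
    right = [
        math.sin(2 * math.pi * (carrier_hz + beat_hz) * i / sample_rate)
        for i in range(n_samples)
    ]
    return left, right
```

For example, `binaural_beat(200.0, beat_for_band("alpha"))` yields a 200 Hz tone for the left ear and a 210.5 Hz tone for the right. The two channels must be delivered separately over headphones for the beat to be perceived.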

The ultimate goal is to create an interactive platform where users can experience individualised emotional data through sound and emotion brainwave to colour mapped cymatics patterns and geometrics (yantra), potentially leading to new insights into human consciousness and social behaviour.

Immersive Thermal Imaging, Sonification, Emotion to Colour Mapped Cymatic Patterns and Geometry

Creating binaural audio from the sonified emotional data and brain wave patterns could lead to significant insights into human consciousness and decision-making. Binaural sounds provide a 3D auditory experience, which can enhance emotional engagement and cognitive processing. When these binaural sounds are processed through a program that generates cymatics—the visual representation of sound vibrations—the impact becomes even more profound.

Cymatics can take various forms, from intricate geometric patterns to fluid movements. By synchronising the cymatics with binaural sounds, the AshZero project creates a synaesthetic experience that engages multiple senses simultaneously. Studies have shown that multi-sensory stimulation can enhance emotional processing and decision-making, as the brain integrates information from different modalities to form a more comprehensive understanding of reality.
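One familiar family of cymatic patterns, the Chladni figures of a vibrating square plate, is often approximated by the nodal lines of cos(nπx)cos(mπy) − cos(mπx)cos(nπy). The sketch below draws those lines as text. It is a textbook-style approximation rather than the AshZero rendering pipeline, and how a given sound would select the mode numbers `n` and `m` is left open here.

```python
import math


def chladni(x, y, n, m):
    """Approximate plate displacement at (x, y) in the unit square for mode (n, m)."""
    return (
        math.cos(n * math.pi * x) * math.cos(m * math.pi * y)
        - math.cos(m * math.pi * x) * math.cos(n * math.pi * y)
    )


def chladni_ascii(n, m, size=41, threshold=0.1):
    """Return rows of text with '#' where the plate is nearly still (nodal lines)."""
    rows = []
    for j in range(size):
        y = j / (size - 1)
        row = "".join(
            "#" if abs(chladni(i / (size - 1), y, n, m)) < threshold else " "
            for i in range(size)
        )
        rows.append(row)
    return rows
```

Printing `"\n".join(chladni_ascii(3, 5))` shows one such figure; different mode pairs give visibly different geometries.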

The AshZero tools also add the functionality to enable these sounds to be visualised with colourgenics or emotion colour-mapped geometries, such as yantras. These yantras are individually generated by analysing each individual's genotype + phenotype imprint and set in motion to create a dynamic experience that is uniquely individualised, providing a deeper, profound effect on emotional and sensory states.

Syncing Sound to Unique Breath Patterns

In personalised immersions, individuals engage with binaural sounds created from their sonified thermal images, which are then paired with personalised breath patterns designed using the mathematical blueprints of Prana units mapped to their unique genotype + phenotype imprint.

These breath patterns are not random but tailored to each individual, allowing them to emulate specific respiratory techniques that can positively impact their nervous, hormonal, and sensory systems.

Research has shown that controlled breathing techniques can significantly influence physiological responses, improving emotional regulation and overall health. By integrating these individualised breath patterns with the immersive experience of sound and cymatics, the AshZero project aims to create a profound sensory journey that reprograms emotional access, leading to more enhanced and expansive decision-making processes.
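One simple way to pair sound with a breath pattern is to shape the volume of the audio so that it swells on the inhale, holds, and fades on the exhale. The sketch below assumes the inhale, hold and exhale durations are already known; how they would be derived from Prana units is not specified here, so the durations are plain parameters and the function names are hypothetical.

```python
def breath_envelope(inhale_s, hold_s, exhale_s, sample_rate=100):
    """One breath cycle as gain values: rise on inhale, hold, fall on exhale."""
    n_in = round(inhale_s * sample_rate)
    n_hold = round(hold_s * sample_rate)
    n_out = round(exhale_s * sample_rate)
    if n_in <= 0 or n_out <= 0:
        raise ValueError("inhale and exhale must each last at least one sample")
    rise = [i / n_in for i in range(n_in)]
    hold = [1.0] * n_hold
    fall = [1.0 - i / n_out for i in range(n_out)]
    return rise + hold + fall


def apply_envelope(samples, envelope):
    """Multiply audio samples by the envelope, repeating it for each breath.

    The envelope must be generated at the same sample rate as the audio.
    """
    return [s * envelope[i % len(envelope)] for i, s in enumerate(samples)]
```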

Converting Audio Frequencies to Emotion Mapped Art

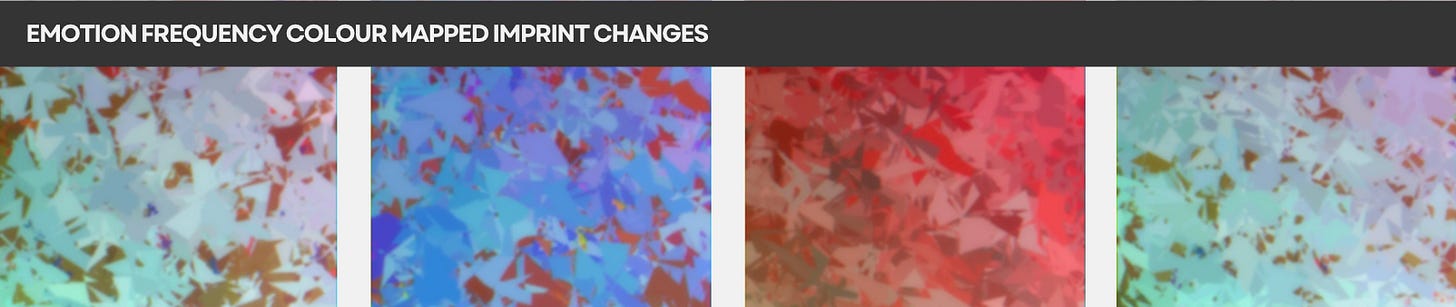

The AshZero workflow adds a layer to emotional reprogramming by converting distinct frequencies in emotion based voice samples of individuals to immersive art. Individual emotion curated voice files are analysed for distinct frequencies which are then mapped to specific emotions (aesthetics / Rasa) based on the Bharata Rasa theory.

For instance, low frequencies might evoke feelings of compassion, while higher frequencies could represent joy or anger. By translating these frequencies into colours and shapes, the AshZero workflow creates a visual representation of the emotional spectrum found in the sound samples. This not only enhances the aesthetic appeal, but also allows for a more immersive experience.
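The frequency-to-colour step can be sketched as follows: find the strongest frequency in a short voice sample, then place it on a colour scale. The blue-to-red scale and the 80 to 1000 Hz range are placeholder assumptions for illustration only; they are not the Rasa-based palette, which is not specified here.

```python
import cmath
import colorsys
import math


def dominant_frequency(samples, sample_rate):
    """Return the strongest frequency (Hz) using a plain discrete Fourier transform.

    Quadratic in the number of samples, so suited to short excerpts only.
    """
    n = len(samples)
    best_bin, best_magnitude = 1, 0.0
    for k in range(1, n // 2):
        coefficient = sum(
            s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples)
        )
        if abs(coefficient) > best_magnitude:
            best_bin, best_magnitude = k, abs(coefficient)
    return best_bin * sample_rate / n


def frequency_to_rgb(frequency_hz, low_hz=80.0, high_hz=1000.0):
    """Map a positive frequency to an RGB colour: low is blue, high is red (assumed)."""
    ratio = math.log(frequency_hz / low_hz) / math.log(high_hz / low_hz)
    position = min(max(ratio, 0.0), 1.0)  # clip to the chosen range
    hue = 0.6 * (1.0 - position)
    red, green, blue = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return tuple(round(255 * channel) for channel in (red, green, blue))
```

Repeating this over successive windows of a recording gives a sequence of colours that can drive the shapes in the final artwork.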

Viewing these emotion-mapped images can have a reprogramming effect on the brain. The combination of sound and visual stimuli can evoke memories, feelings, and even physiological responses. This multi-sensory experience can enhance emotional awareness and promote a deeper understanding of one's feelings. The abstract nature of the artwork allows for personal interpretation, inviting viewers to engage with the immersive art pieces on a more intimate level. As they observe the vibrant colours and dynamic shapes, they may find themselves reflecting on their own emotional states. This can lead to a synaesthetic experience that aligns them more closely with their raw emotional states, removing conditioning and limited self-perception. Over time, as the brain reprograms itself, the workflow generates different colour-mapped art, reflecting changes in the frequency patterns the user subconsciously imbibes through emotional reprogramming, which in turn creates a dynamic shift in their decision-making process.

Project Overview - Methodology

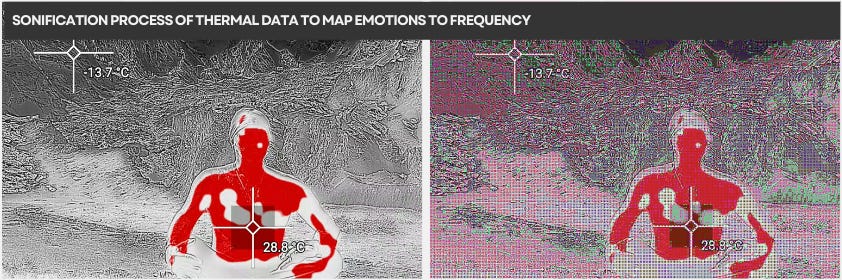

1. Thermal Data Capture:

The project captures thermal images of individuals expressing emotions in different contexts. Research indicates that thermal imaging can effectively and without bias identify emotional states due to the physiological changes that accompany different feelings, such as variations in facial temperature and blood flow.

2. Sonification Process:

The captured thermal data is sonified to create soundscapes that reflect the emotional states of the individuals. This involves mapping specific thermal features to sound parameters, allowing for a direct auditory representation of emotional data. The resulting sound is then processed to extract binaural beat frequencies, which are known to correspond to specific brainwave patterns associated with various emotional states. The brainwave patterns can be attributed to select bija mantra resonance and frequencies, which are accessed in a similar way to binaural beats.

3. Dynamic Arrangement:

The binaural sounds are dynamically arranged using immersive processing techniques such as Inter-aural Time Difference (ITD) and Inter-aural Level Difference (ILD). These techniques enhance the spatial audio experience, allowing listeners to perceive sound directionality and depth, which can significantly impact emotional engagement.
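ITD and ILD can be illustrated with a small sketch that delays and attenuates the signal reaching the ear farther from the source. The time difference uses Woodworth's spherical-head approximation with a typical head radius. The level difference is a deliberately crude, frequency-independent assumption (real ILD varies strongly with frequency), and the 6 dB maximum is a placeholder value.

```python
import math

HEAD_RADIUS_M = 0.0875        # typical adult head radius (assumed)
SPEED_OF_SOUND_M_S = 343.0    # in air at roughly 20 degrees Celsius


def itd_seconds(azimuth_deg):
    """Woodworth approximation of ITD for azimuths between -90 and 90 degrees."""
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (theta + math.sin(theta))


def spatialise(mono, azimuth_deg, sample_rate=44100, max_ild_db=6.0):
    """Return (left, right) channels; positive azimuth places the source on the right.

    The far ear receives the signal later (ITD) and quieter (ILD).
    """
    theta = math.radians(azimuth_deg)
    delay = round(abs(itd_seconds(azimuth_deg)) * sample_rate)
    far_gain = 10 ** (-(max_ild_db * abs(math.sin(theta))) / 20)
    near = list(mono) + [0.0] * delay
    far = [0.0] * delay + [far_gain * s for s in mono]
    return (far, near) if azimuth_deg >= 0 else (near, far)
```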

4. Cymatics and Geometric Visualisation:

The project further explores the generation of cymatics—visual patterns created by sound vibrations—by mapping the sonified sounds to geometrical representations, such as yantras. These visualisations can be correlated with emotional states and colour relativity, enhancing the immersive experience. Research has shown that visual stimuli can significantly influence emotional responses, making this integration particularly impactful.

5. Breath as a Catalyst: Individualised Prana Units:

In addition to the core methodologies outlined, the AshZero project incorporates individualised breath patterns that are integral to enhancing the immersive experience. These breath patterns are derived from the mathematical blueprints of Prana units (prana is the magnetic flow of breath, or the vitality units we draw in from the environment), ensuring they are tailored to each participant's unique physiological and emotional profile. By emulating these personalised breathing techniques while engaging with the binaural sounds and cymatics, individuals can significantly influence their nervous, hormonal, and sensory systems. This integration of breath work with auditory and visual stimuli not only deepens the impact of the immersive experience, but also extensively enhances the deprogramming of emotional pathways.

6. Converting Emotion Audio to Emotion Mapped Art:

This step maps changes in voice patterns over time by analysing emotion-mapped voice samples for emotion frequencies and converting them to colour-mapped visual art. These maps can be studied periodically to ascertain the reprogramming of emotional intelligence through the application and use of the tools.

Impact on Emotional Patterns:

Over time, the immersive experience created by the sonified sounds and visual patterns aims to expand sensory access, breaking linear and restrictive emotional patterns. This approach aligns with findings in psychology that suggest auditory and visual stimuli can facilitate emotional processing and transformation, promoting a more adaptive response to changing environments.

As listeners immerse themselves in the binaural sounds and cymatics generated from the sonified thermal images, they may find themselves experiencing a shift in their emotional state and decision-making processes.

The combination of auditory and visual stimuli, tailored to their unique brain wave patterns, can help individuals gain a deeper understanding of their emotions and the factors that influence their choices. This transformative experience can lead to greater self-awareness, emotional regulation, and adaptive decision-making in various aspects of life.

By aligning sound with the mathematical patterns of geometry, colour, and emotion, one can tap into a transformative experience that enhances their emotional and sensory capacities, mirroring the effects observed in modern sonification techniques such as binaural beats.

Role In Therapeutics

Under supervision, this framework has high potential to influence neural programming in behavioural disorders such as generalised and social anxiety, panic disorder, depression, Obsessive-Compulsive Disorder (OCD), Post-Traumatic Stress Disorder (PTSD), Attention Deficit Hyperactivity Disorder (ADHD), low self-esteem, Body Dysmorphic Disorder, Narcissistic, Avoidant, Dependent and Borderline Personality Disorders, Impulse Control Disorders and Adjustment Disorders. In addition, it can serve as a strong adjuvant in the therapeutics of cognitive disorders such as Aphasia, Dementia, Alzheimer’s Disease, Traumatic Brain Injury (TBI) and Autism Spectrum Disorder (ASD).

Summary

Sonification serves as a transformative tool for interpreting data, bridging the gap between abstract information and human experience. The AshZero project explores the intersection of thermal imaging, emotion recognition, auditory representation and sound science, colour-genics, and therapeutics, contributing to the evolving field of highly personalised healthcare by empowering individuals to access unique health options and emotional expansion, ultimately promoting autonomy and enhancing quality of life.

By sonifying thermal images and mapping them to brain wave patterns using mathematical blueprints of mantras, this project integrates ancient knowledge with contemporary technology to enhance emotional awareness and sensory expansion. This innovative approach empowers individuals to break free from external, linear perspectives often found in health coaching and counselling towards independence. By embracing their raw emotional traits without the constraints of societal judgments, participants can achieve a deeper understanding of their emotional landscapes, fostering a sense of fulfilment and authenticity.

Without an external framework or supervised hierarchy, this workflow empowers individuals to explore their emotional reprogramming on their own terms, promoting autonomy and self-discovery. This transparency allows individuals to explore without the pressure of interpersonal judgments or societal expectations, enabling the breaking of conditioned responses, leading to more adaptive decision-making and a deeper understanding of emotional triggers and alternative responses or perspectives.

Ultimately, the AshZero project aims to facilitate personal growth and social change, allowing individuals to navigate their emotional worlds with greater clarity and purpose.

REFERENCES

Assiri B, Hossain MA. Face emotion recognition based on infrared thermal imagery by applying machine learning and parallelism. Math Biosci Eng. 2023;20(1):913-929. Available from: https://doi.org/10.3934/mbe.2023042

Cardone D, Merla A. New Frontiers for Applications of Thermal Infrared Imaging Devices: Computational Psychophysiology in the Neurosciences. Sensors (Basel). 2017;17(5):1042.

Cardone D, Merla A. The thermal dimension of psychophysiological and emotional responses revealed by thermal infrared imaging. Math Biosci Eng. 2023;20(5):7140-7169.

Cardone D, Pinti P, Merla A. Thermal infrared imaging-based computational psychophysiology for psychometrics. Comput Math Methods Med. 2015;2015:984353.

Caycedo Alvarez N. Sonification of EEG Signals for BCI Applications [Master's thesis]. University of Stuttgart; 2019.

Chandra X-ray Observatory. Galactic Center. Smithsonian Astrophysical Observatory. [Internet]. Available from: https://chandra.si.edu/sound/gcenter.html

Chandra X-ray Observatory. Sonification: Turning X-ray Light into Sound. Harvard-Smithsonian Center for Astrophysics. [Internet]. Available from: https://chandra.harvard.edu/blog/node/873

Chandra X-ray Observatory. Sonifications. Smithsonian Astrophysical Observatory. [Internet]. Available from: https://chandra.si.edu/sound/

Goulart C, Valadão C, Delisle-Rodriguez D, Caldeira E, Bastos T. Emotion analysis in children through facial emissivity of infrared thermal imaging. PLoS One. 2019;14(3):e0212928.

Huang Y, Chen F, Lv S, Wang X. Facial Expression Recognition: A Survey. Electronics. 2021;10(20):2519.

InfraTec. Thermography. InfraTec GmbH. [Internet]. Available from: https://www.infratec.eu/thermography/

Ioannou S, Gallese V, Merla A. Thermal infrared imaging in psychophysiology: Potentialities and limits. Psychophysiology. 2014;51(10):951-63.

Ioannou S, Morris P, Terry S, Baker M, Gallese V, Reddy V. Sympathy Crying: Insights from Infrared Thermal Imaging on a Female Sample. PLoS One. 2016;11(10):e0162749.

Jarlier S, Grandjean D, Delplanque S, N'Diaye K, Scherer KR, Vuilleumier P, et al. Thermal Analysis of Facial Muscles Contractions. IEEE Trans Affect Comput. 2011;2(1):2-9.

Jiang Z, Xu Y, Rossi S, Wang B, Qiu T, Yang W. Emotion recognition from infrared thermal images using deep learning method. Eng Appl Artif Intell. 2022;114:105157.

Kopaczka M, Kolk R, Schock J, Burkhard F, Merhof D. A Thermal Infrared Face Database with Facial Landmarks and Emotion Labels. arXiv [Preprint]. 2020 Sep 22:2009.10589.

Kopaczka M, Nestler J, Merhof D. Face detection in thermal infrared images: A comparison of algorithm- and machine-learning-based approaches. Int J Electron Commun Eng. 2017;5(1):24-35.

Koprowski R, Wilczyński S, Samojedny A, Wróbel Z, Deda A. Image analysis and processing methods in verifying the correctness of performing low-invasive esthetic medical procedures. Biomed Eng Online. 2013;12:51.

Koprowski R. Automatic analysis of the trunk thermal images from healthy subjects and patients with faulty posture. Comput Biol Med. 2015;62:110-8.

Kosonogov V, De Zorzi L, Honoré J, Martínez-Velázquez ES, Nandrino JL, Martinez-Selva JM, et al. Facial thermal variations: A new marker of emotional arousal. PLoS One. 2017;12(9):e0183592.

Lee JM, An YE, Bak E, Pan S. Improvement of Negative Emotion Recognition in Visible Images Enhanced by Thermal Imaging. Sustainability. 2022;14(22):15200. Available from: https://doi.org/10.3390/su142215200

NASA. Hubble: Multimedia - Sonifications. National Aeronautics and Space Administration. [Internet]. Available from: https://science.nasa.gov/mission/hubble/multimedia/sonifications/

NASA. Listen to the Universe: New NASA Sonifications and Documentary. National Aeronautics and Space Administration. [Internet]. Available from: https://www.nasa.gov/missions/chandra/listen-to-the-universe-new-nasa-sonifications-and-documentary/

Nguyen H, Kotani K, Chen F, Le B. A Thermal Facial Emotion Database and Its Analysis. In: Klette R, Rivera M, Satoh S, editors. Image and Video Technology. PSIVT 2013. Berlin, Heidelberg: Springer; 2014. Available from: https://doi.org/10.1007/978-3-642-53842-1_34

Rönnberg N. Sonification for Conveying Data and Emotion. 2021:56-63. doi:10.1145/3478384.3478387.

Winters M. Sonification of emotion: Strategies and results from the intersection with music. Organised Sound. 2013;18(3):208-216.

Winters RM, Kalra A. Emotional Response to Music through Auditory Display and Sonification: Current Work and Future Directions. Proc Int Conf Auditory Display. 2021;2021:1-8.

Winters RM. Data Sonification for Beginners. Modern Language Association. 2023 Jan 18.